Squinting into the blue-gray light of yet another computer terminal, John McCarthy looked as though he were suffering from eyestrain, or from one too many software demonstrations. He was sitting in the industrial exhibition hall at the annual convention of the American Association for Artificial Intelligence, where companies with names like Teknowledge and IntelliCorp were demonstrating some of the first commercial fruits of a field he had done much to create. McCarthy, a Stanford University computer scientist, had just finished serving a year as president of AAAI, and earlier that day he had addressed an auditorium filled with people who had come to hear him describe his 30-year-old effort to instill in computers what humans call common sense. For many, the speech was the climax of the convention, and now, in its aftermath, he sat wearily rubbing his eyes, half listening, as an employee of a firm called Inference Corporation explained the network of lines and boxes cast upon the screen by a program ambitiously christened ART, the Automated Reasoning Tool.

In the English that computer scientists speak, ART is known as a “knowledge engineering environment,” an aid to designing a complex new breed of programs called expert systems, which automatically make decisions in such fields as financial planning, medical diagnosis, geological exploration, and microelectronic circuit design.

As anyone who has tried to write even the simplest program knows, the world inside a computer can be an inhospitable place, unforgiving of the most innocent human foible. Drop a comma or misnumber a line and a program crashes, leaving only a string of incomprehensible “error messages” to explain what went wrong. Once loaded into a computer, a program like ART organizes the tabula rasa of silicon chips into more habitable surroundings. By automatically taking care of some of the messy and tedious details of programming, ART frees users to concentrate on the big picture. The result, according to Inference Corporation’s colorful brochure, is a more efficient means of designing programs that capture the “concepts, facts, and beliefs” of human expertise.

“The computer is no longer just a fast number cruncher,” the company’s enthusiastic copywriter had written. “[I]t is now possible to program human knowledge and experience into a computer…Artificial intelligence has finally come of age.”

This was a common refrain during the 1984 convention of AAAI, an organization of scientists who believe that intelligence can be divorced from its biological substrate and planted carefully in machines. Once an arcane discipline in the upper stratosphere of computer science, AI, as initiates call it, has recently captured the imagination of industrialists and venture capitalists who hope to profit from the attempt to simulate the workings of the mind. But in the long journey from the hieroglyphic formulas of technical papers to the hyperbolic prose of publicists, things are sometimes lost, or rather added, in translation. Most of the scientists had come to the convention, which was held in early August at the Austin campus of the University of Texas, to meet old friends and discuss the small advances they’d made, but some of the companies that had staked out space in the exhibition hall acted as though the age-old dream of creating intelligent companions had already been realized.

“We’ve built a better brain,” exclaimed a brochure for one of ART’s competitors, TIMM, The Intelligent Machine Model: “Expert systems reduce waiting time, staffing requirements and bottlenecks caused by the limited availability of experts. Also, expert systems don’t get sick, resign, or take early retirement.” Other companies, such as IBM, Xerox, Texas Instruments, and Digital Equipment Corporation, were more conservative in their pronouncements. But the amplified voices of their salesmen, demonstrating various wares, sounded at times like carnival barkers, or prophets proclaiming a new age.

As the scientists moved from one technical session to the next, gathering in groups to talk with colleagues, they had to dodge corporate recruiters–“the people in suits and ties chasing the people with the bushy hair,” as one researcher bemusedly called them. AI has always attracted a good share of eccentrics, but by the time of the Austin conference the number of people with backpacks, beards, and ponytails was overwhelmed by hordes of interlopers with name tags indicating how commercially respectable the field had become: IT&T, Martin Marietta, Rockwell International, Mobil, Amoco, the U.S. Army, Air Force, Navy, and Coast Guard, the U.S. Missile Command. While some of the visitors were there as corporate headhunters, most had paid $175 a session for beginning tutorials, which AAAI offered as a way to help pay its operating expenses.

A Conscious Machine?

To ensure a good draw, the sessions were taught by such AI luminaries as Marvin Minsky of MIT. Intelligence, Minsky told his audience, is not so mysterious. It is simply “the set of things we don’t understand yet about what the mind does…Whenever we understand something, we become contemptuous of it. I think the same thing will happen to intelligence in the next ten or a thousand years.” In 10,000 years, he said, we’ll look back at our romantic notion of the elusiveness of the mind and laugh. Can a machine be conscious? he asked his audience. Can it know and feel the meaning of the things it is dealing with? Minsky clearly thought that it could. Consciousness is not any more mysterious than intelligence, he said. “I don’t think it’s subtle at all.” It’s just that in thinking about thinking, consciousness “steps on itself and squashes itself.” If we can learn to avoid such self-referential vertigo, he believed, eventually consciousness will be mechanized.

The figure of the very philosophical Minsky addressing an auditorium of corporate acolytes crystallized the aura of weird juxtaposition that surrounded AAAI-84. The AI researchers, now a minority, were there to attend presentations of technical papers on such topics as speech recognition and language understanding (hearing Graeme Hirst of the University of Toronto on “A Semantic Process for Syntactic Disambiguation,” or David L. Waltz and Jordan B. Pollack of the University of Illinois on “Phenomenologically Plausible Parsing”). They participated in panel discussions on, for example, “The Management of Uncertainty in Intelligent Systems” (in dealing with the fact that real-world information is incomplete, inexact, and often incorrect, should one use fuzzy logic, standard probability theory, or evidential reasoning?). While the scientists discussed the subtleties and difficulties that naturally surround an enterprise as ambitious as artificial intelligence, the emissaries from the corporate and military worlds were drawn to sessions like “AI in the Marketplace,” or “DARPA’s Strategic Computing Project,” an effort by the Defense Department’s Advanced Research Projects Agency to develop intelligent self-guided tanks and expert systems for “real-time battle management.”

As he sat amidst the din, McCarthy seemed unimpressed. He closed his eyes, leaned forward, and rested his head on his hands, which were clasped in front of him like those of a man in prayer. Then he withdrew from the noise around him, into the more familiar environment of his own mind. As much as any person alive, he was appreciative of how far the field had come, but he was also aware of how very basic were the problems yet to be solved. “Things have been slower than I’d hoped,” he said, in an interview before the conference, “but perhaps not slower than I expected. I expected that these problems were difficult. I didn’t think they were almost solved.” It is possible, he said, that one day soon “somebody may get a really brilliant idea. It may be that AI is a problem that will fall to brilliance so that all we lack is one Einstein.” But he accepts that, most likely, he and his field are at the beginning of a long slow progression. “I think this is one of the difficult sciences like genetics, and it’s conceivable that it could take just as long to get to the bottom of it. But of course the goal is that one should be able to make computer programs that can do anything intellectual that human beings can.”

From the time Gregor Mendel performed the first genetic experiments on plants in a monastery garden to the discovery by James Watson and Francis Crick of the double helical structure of DNA, approximately a century passed. To McCarthy it wasn’t surprising that it might take as long to understand the nature of the intelligence that had deciphered the chemical structure of life. Like his colleagues who had come to Austin, he believed that the key to unlocking the mysteries of thought would be the digital computer.

LISP Language

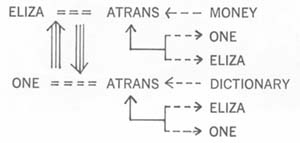

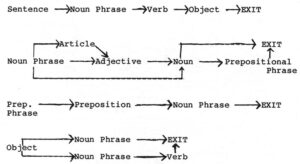

It was McCarthy who, in 1956, coined the term artificial intelligence and later developed the language, Lisp, in which most AI programs are written. More conventional computer languages, such as Fortran, Cobol, Pascal, and Basic, are designed to manipulate numbers and words; they perform high-speed mathematical calculations and sort and collate lists of employees, customers, items in inventory, airline reservation information–the stuff of data processing. Lisp, however, was written to handle a loftier variety of symbols–those representing rules and concepts that many AI researchers believe are similar to those with which we think.

Throughout the 1960s and 1970s this new kind of computing–symbolic processing, it was often called–was limited largely to the AI labs at universities such as Stanford, the Massachusetts Institute of Technology, and Pittsburgh’s Carnegie-Mellon, where researchers used computers to study the nature of intelligence. Unlike the psychologists and philosophers who had preceded them in this age-old pastime of thinking about thinking, the AI researchers tested their theories by describing them in Lisp and seeing what happened when they ran the resulting programs on a machine. Since the scientists assumed that intelligence was a kind of mechanism–a subtle and complex processing of symbols–they believed that, under the influence of Lisp, computers eventually would not only model human thought–as they already modeled tropical storms, new aircraft designs, or thermonuclear wars–but that they would actually engage in the act of thinking.

Lisp proved to be a powerful invention, admired by computer scientists for the elegance of its design. With most languages, a programmer must decide in advance how much memory his creations will require and cordon it off early in the program. With Lisp such strict preplanning is unnecessary. Memory is automatically captured and surrendered as needed, as the program is run, allowing for a kind of spontaneity and fluidity necessary for mimicking the nuances of thought. Also, in Lisp both the program and the data it processes are written in the same format, as words surrounded by parentheses. To the computer, data and instructions look exactly the same, so one program can provide the grist for another’s mill: programs can read programs and write programs–they can even inspect and modify themselves, suggesting the possibility of software that not only learns but is aware of its own existence.
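The idea that programs and data share one format can be sketched outside Lisp. In the hypothetical Python illustration below (not Lisp itself, and not McCarthy's code), nested lists stand in for parenthesized Lisp expressions; the helper names `evaluate` and `swap_operator` are invented for this example. Because a program is just a list, one function can inspect and rewrite another program before it is run.

```python
import operator

# Hypothetical operator table; nested lists like ["+", 1, ["*", 2, 3]]
# play the role of the Lisp expression (+ 1 (* 2 3)).
OPS = {"+": operator.add, "-": operator.sub, "*": operator.mul}


def evaluate(expr):
    """Evaluate a nested-list expression; bare numbers evaluate to themselves."""
    if isinstance(expr, list):
        op, *args = expr
        values = [evaluate(a) for a in args]
        result = values[0]
        for v in values[1:]:
            result = OPS[op](result, v)
        return result
    return expr


def swap_operator(expr, old, new):
    """Treat a program as data: return a copy with every `old` operator replaced by `new`."""
    if isinstance(expr, list):
        head = new if expr[0] == old else expr[0]
        return [head] + [swap_operator(a, old, new) for a in expr[1:]]
    return expr


program = ["+", 1, ["*", 2, 3]]
print(evaluate(program))          # 7
rewritten = swap_operator(program, "*", "+")
print(rewritten)                  # ['+', 1, ['+', 2, 3]]
print(evaluate(rewritten))        # 6
```

In real Lisp the same trick needs no translation layer: the expression a program manipulates as a list is, unchanged, a program the system can evaluate.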

For these and other reasons, Lisp quickly became regarded as a most receptive vehicle for artificial intelligence programming. Like human languages, it has evolved to meet the increasing demands of its users. Under the tutelage of McCarthy and his colleagues, Lisp has gone on to beget MacLisp, InterLisp, ZetaLisp, FranzLisp (in honor of the composer who is similarly named)–a number of dialects that by the time of the Austin conference were being merged into a babelesque effort called CommonLisp, which, it was hoped, would become the lingua franca of the field.

To better take advantage of this ever more fluent tongue, a group of scientists at MIT began, in 1973, to design a high-powered personal computer especially for writing and running AI programs. During the early 1980s, these Lisp machines became a common sight in university AI labs as companies such as Symbolics, Xerox, and Lisp Machine, Inc., began to develop and sell the product. But these purveyors of symbolic processing hardware envisioned a potentially more widespread and lucrative market: the corporations whose interest had been piqued by reports that AI, in the form of expert systems, was ready for export from the universities to the marketplace.

“I would get several phone calls a week consisting of three types of questions,” said Stanford computer science professor Edward Feigenbaum. “‘Can I send a guy out to your place for a year?’ ‘Would you do a project for us?’ And, ‘Do you guys have any software we can have?’ The answer to the first question was, ‘No way.’ The answer to the second question was that we only do contracts that involve basic research–we’re not a job shop. The answer to the third question was, ‘Yes we do. Some is available in the public domain, some from the Stanford Office of Technology Licensing.’ ” After the calls kept coming, Feigenbaum realized, “This sounds like a company.” In 1981, he and several colleagues started IntelliGenetics (later renamed IntelliCorp) and Teknowledge to provide training, consulting, and software aids to companies interested in developing expert systems. They had little trouble attracting investors.

“The venture capital community is always looking for the next way to stimulate technology so that big rewards will be reaped,” Feigenbaum explained. “In 1980 and ’81 it was biotechnology. Now it is AI.”

In 1982, Business Week announced that the world was at “the threshold of a second computer age.” Two years later, just before the AAAI convention, the magazine ran a cover story headlined “AI: It’s Here.” Even AAAI joined the excitement, distributing bumper stickers that read: “Artificial Intelligence: It’s for Real.” But to many of the scientists who attended the Austin meeting, such enthusiasm seemed premature.

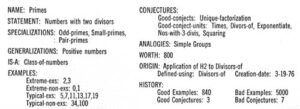

As promising as expert systems were, they were based on a fairly superficial form of intelligence, consisting largely of long lists of prepackaged “if-then” rules, gleaned from interviews with human experts. For example, one of the rules used by Mycin, an expert system on bacterial infections, read like this: “If (1) the infection is primary-bacteremia, and (2) the site of the culture is one of the sterile sites, and (3) the suspected portal of entry of the organism is the gastrointestinal tract, then there is suggestive evidence that the identity of the organism is bacteroides.” Knowledge of this sort is called heuristic–while not infallible, it guides us toward judgments that experience tells us are likely to be true.
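How a program puts such if-then rules to work can be sketched in a few lines. The Python example below is a simplified illustration, not Mycin's actual machinery: Mycin reasoned backward from goals and weighted its conclusions with numeric certainty factors, whereas this sketch uses plain forward chaining over true/false facts. The `Rule` class, the `forward_chain` function, and the fact strings paraphrasing the Mycin rule are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    conditions: frozenset  # facts that must all be known for the rule to fire
    conclusion: str        # fact added when the rule fires


# A paraphrase of the Mycin rule quoted above (wording is hypothetical).
RULES = [
    Rule(
        frozenset({
            "infection is primary-bacteremia",
            "culture site is sterile",
            "suspected portal of entry is gastrointestinal tract",
        }),
        "organism may be bacteroides",
    ),
]


def forward_chain(facts, rules):
    """Fire every rule whose conditions are all known, until nothing new is added."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for rule in rules:
            if rule.conditions <= known and rule.conclusion not in known:
                known.add(rule.conclusion)
                changed = True
    return known


patient = {
    "infection is primary-bacteremia",
    "culture site is sterile",
    "suspected portal of entry is gastrointestinal tract",
}
print("organism may be bacteroides" in forward_chain(patient, RULES))  # True
```

The sketch also shows the critics' point: the program matches strings against strings, with no model of the body, infection, or anything else behind them.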

Heuristic programming clearly was a powerful new software technology, but some critics within the field doubted that it deserved the name artificial intelligence. Unlike a human expert, Mycin didn’t contain an internal model of the human body; Prospector, an expert system for geological exploration, didn’t know what sedimentation, metamorphism, or vulcanism were. And, as was clear from McCarthy’s presidential address, none of these programs contained anything that could be called common sense.

McCarthy, who was trained as a mathematician, believed that the key to artificial intelligence was in producing a formal system in which the vast number of facts that comprise everyday existence–if a thing is put in water it will get wet, a glass will shatter if it falls–can be generated from a finite set of axioms using a special logic he was inventing. McCarthy believed that completely describing this common sense knowledge was a more subtle matter than (as Feigenbaum has suggested) compiling millions of if-then rules. McCarthy hoped to devise a system–with, perhaps, no more than 100,000 rules–in which the seemingly infinite range of situations that an intelligent being might encounter is implicit, just as all of literature exists, in a dormant sense, in the rules of grammar and spelling and the 26 letters of the alphabet. Since 1956, McCarthy had been working quietly on his own, communicating mostly with a handful of colleagues at various universities who had taken upon themselves this task of trying to formalize the nature of reality. As with Lisp, he hoped the system he would eventually create would have an elegance and an internal consistency–a mathematical beauty–all its own. Although he anticipated a day when artificial intelligence communicated on an equal (or superior) basis with mankind, he wasn’t particularly interested in immediate applications.

According to an oft-quoted heuristic, it takes about ten years for an idea to move from the laboratory to the marketplace. The theories behind the expert systems technology that was being displayed at the Austin convention were well over a decade old. But what was history to the scientists was news to the business world. While the attendance at AAAI’s first conference in 1980 was only about 800, AAAI-84 attracted more than 3,000 conventioneers, two thirds of them from outside the academic community.

All fields, of course, have their theoretical and applied sides, but some of the scientists at Austin were worried that the allure of commercialization was distracting their colleagues from more important concerns. AI is in a precarious position. Minsky estimates that there are fewer than a hundred people doing theoretical work. Each year about 25 to 30 students receive PhDs with specialties in artificial intelligence, said Daniel Bobrow, editor of the academic journal Artificial Intelligence and a scientist at Xerox’s Palo Alto Research Center. “Bell Labs would hire them all if they could. So would IBM. So who will go to the universities? Some people claim that we’re eating our own seed corn.”

At the same time that the quality and amount of basic research is being threatened by the competition of industry, expectations are very high–those of the businesses that are investing in AI, and the public, which is intrigued by the futuristic scenarios reported in the press. In fact, a growing number of researchers are so concerned about the potentially disastrous effects of this combination of heightened hopes, hoopla, and inadequate theoretical work, that they anticipate an “AI winter,” when a disappointed business community and military, convinced that artificial intelligence is a boondoggle, stop funding research. At the closing session of the Austin conference, “The ‘Dark Ages’ of AI–Can We Avoid Them or Survive Them?,” Roger Schank, a prominent AI researcher from Yale University, made a rhetorical plea:

“Remember AI?…the first conference and the second conference. We used to sit and argue about things, not whether or not [our companies] should go public…We as an AI community have forgotten that we’re here to do science, and that we are nowhere near the solution. We used to sit and fight about these things in public, now we all sit and talk [as though] it’s all solved and give nice, slick talks with pretty slides and pictures on them. But I’m very concerned about the fact that people don’t want to do science anymore. It is the least appealing job on the market right now…It’s easier to go into a startup company and build products, it’s easier to go into a big company and have a little respite and do some contract work, it’s easier to do all those things than to go into a university and try to organize an AI lab…and sit there on your own trying to do science. It’s difficult. But I can say that if we don’t do that we’ll find that we are in the dark ages of AI.”

As founder of his own company, Cognitive Systems Inc., Schank was hardly one to harp about commercialization. He recognized the irony of his role as pure researcher–his language and learning work is as seminal as it is controversial–and entrepreneur. That, he said, is part of the “schizophrenic” nature of the field:

“It is incumbent upon AI, because we’ve promised so much, to produce–we must produce working systems. Some of you must devote yourself to doing that, and part of me devotes myself to doing that. It is also the case that some of you had better commit to doing science, and part of me commits to doing that. And if it turns out that our AI conference isn’t the place to discuss science then we had better start finding a place where we can.”

©1985 George Johnson

George Johnson, a freelance writer, is reporting on the quest to build computers smarter than humans.